Our categories

Lots of new products and product collections

Wall Art

Decor

Textiles

Kitchenware

Bathroom

Garden

Lighting

Aromatherapy

Storage

Seasonal Goods

Product collections

Explore product collections from our vendors

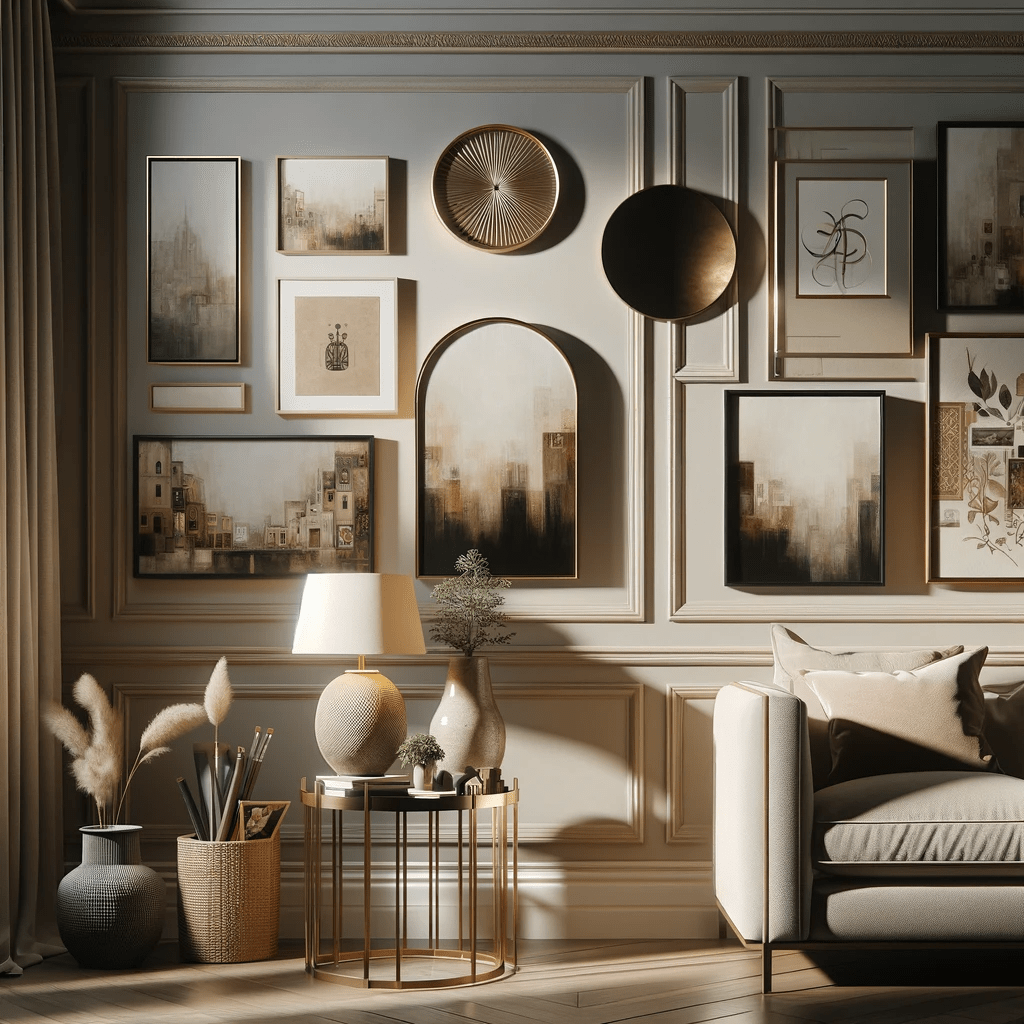

Furniture collection of the week

The most popular products from the collection

Rules for choosing furniture

Whether you live on your own or with a family, your living room is an important space.

This room is where your family spends time together, and it is where most of your guests will spend the majority of their time. Choosing furniture that creates a pleasant, welcoming appearance while holding up against the wear and tear of everyday life is the key to making this space work for your needs.

- Choose items in a single color scheme and style
- Consider the area of the room
- Do not buy unnecessary pieces of furniture

Latest articles

In the heart of Valencia

Ethimo mountain style

For clear thinking

The clean series

Flowing serpentines

Online store with a wide selection of furniture and decor

Furniture is an essential part of any room. It gives the room the right atmosphere, making the space cozy and comfortable, creating favorable conditions for productive work, or helping you relax after a hard day. More and more often, customers prefer to order from an online store, where they can sit down at the computer in their free time, arrange the furniture in a photo, and calmly buy the pieces they like. The online store has a large catalog of furniture: both home and office furniture are available.

Furniture production is a modern form of art

Furniture manufacturers, like manufacturers of other home goods, offer an amazing range: there are both standard mass-produced products and unique creations, furniture from professional craftsmen that will be appreciated by true connoisseurs of beauty. We have selected for you the best models from modern craftsmen who have managed to ingeniously combine elegance, quality, and practicality in every piece. Our assortment includes products from proven companies that, over many years of continuous joint work, have given us no reason to doubt their reliability and honesty. All of them guarantee the high quality of their products, excellent performance, an attractive appearance, a long service life, and safety.